Hitchhiker's guide to AI for beginners

This will be more of an FAQ-style article, which is divergent from my regular content, but I wanted to write a guide for non-technical people or people unfamiliar with AI. You hear all these buzzwords, and they may be scary or intriguing. You want to learn more, but have no clue where to start.

You are worried about AI taking your job or what an AI-powered future may look like.

If you fit into one of the above categories, then this article is for you.

PS: The Hitchhiker's Guide to the Galaxy - Wikipedia ~ Inspired by this insanely cool movie.

What is AGI?

Let’s get this out of the way first. Nobody seems to have a clear idea of what AGI actually means, but here’s a general guideline.

AGI, at its core, is a goal post shift; previously, “AI” was thought of as being the all-intelligent machine that could think on the same level or even at a higher level than human beings.

AI companies started calling their “chatbots” (I’m using chatbots loosely for now) AI, thus AI lost the meaning it once had, and hence why they coined this new term “AGI”.

AGI, therefore, simply means the following:

A machine that can understand information within the context of the real world.

A machine that can learn and adapt to perform just about any task a human can do.

Wait! Can’t ChatGPT already do this?

No, ChatGPT can retrieve information, it can generate some pretty cool answers, and answer you in a way that you could mistake it for a human, but in reality, this is just a clever algorithm. ChatGPT itself doesn’t understand the meaning of the text it generates.

Here’s an example:

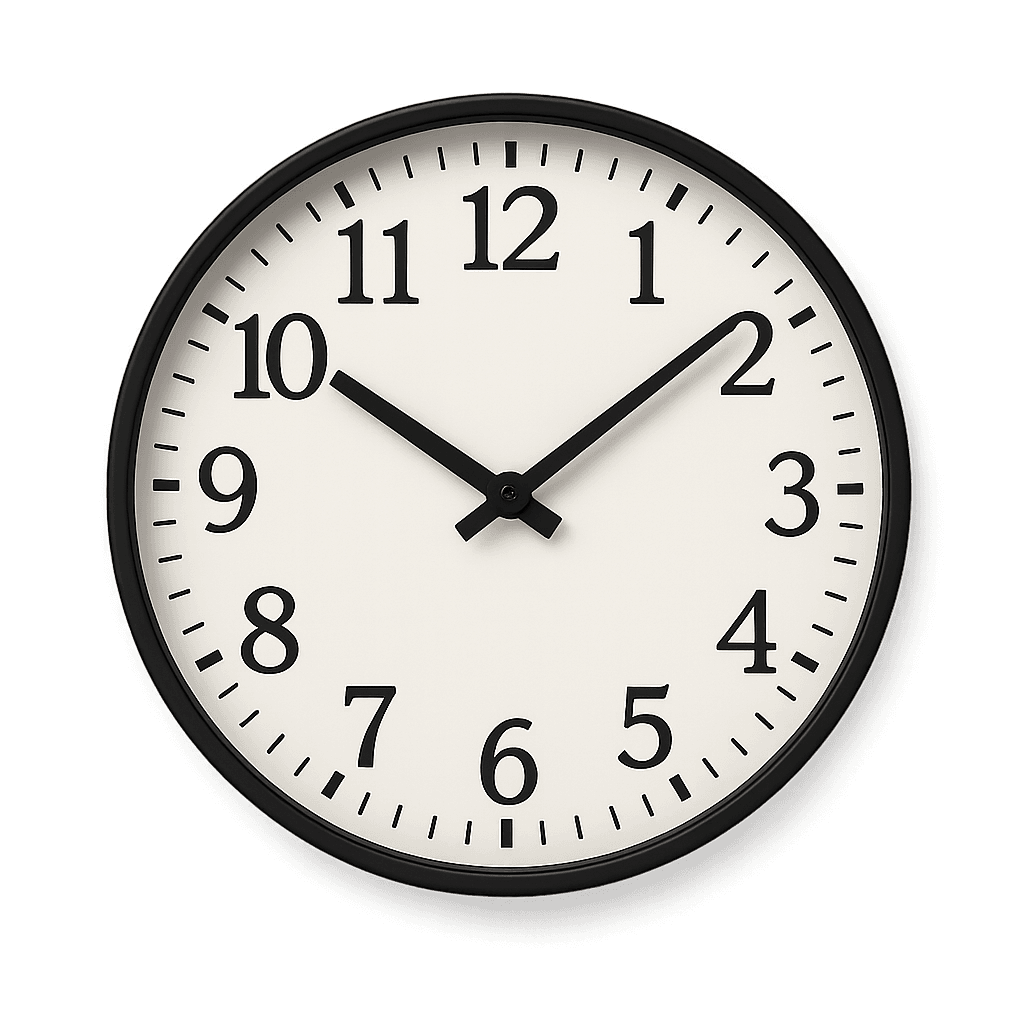

What I asked ChatGPT: “Draw me a clock with the hands showing the time as 12 minutes past 5 pm.”

What it generated:

The algorithm picked up that I want an image of a clock, but it got the time horribly wrong. Instead of responding with: “I am sorry, but I cannot draw those numbers on the clock,” or something like that, it still went ahead and drew the clock.

This is because it doesn’t fully understand the instruction; it’s looking for similar information in its vast knowledge base and just retrieving something that’s most similar. There is some fancy science going on to understand meaning to an extent, but still, it does not truly understand anything besides pattern matching.

Even after I corrected ChatGPT: “Uhm, but the time was wrong, please show the time 5:12 pm”, I still get:

AGI would therefore be aware of the question hollistically, and be able to draw the correct image, and if it can’t, it would recognise that it doesn’t have that ability, and try to learn that skill or just respond with: “I don’t know how to do that yet…”.

What is an LLM?

LLM stands for “Large Language Model”. This is basically what most refer to as “AI” or “AI Chatbots”. These are powerful programs that are trained on large pieces of information from around the web; they have a “brain” filled with billions of documents and pieces of information.

When you ask a Chatbot a question, it’s basically doing something similar to “Google Search”. The Chatbot breaks down your question into something called “tokens”, which are mathematical representations of words.

These tokens now give the Chatbot a superpower that goes beyond a simple keyword search, in that it can use math to figure out the relationship of words based on how these tokens are arranged, how far apart they are from each other, and so on.

So essentially, it takes your question and does a similarity search to find the most relevant tokens in its knowledge base, then uses those tokens together with some clever algorithmic magic to figure out the most likely answer that would resolve your query.

Now, complex LLMs like ChatGPT do a lot more magic in the background than just mathematical formulas, but at the end of the day, it’s still just a program that applies an algorithm.

What is a model?

Now that we have an understanding of how LLMs work, this is basically a continuation of the last question: “What is an LLM?”

AI companies like OpenAI spend months collecting data from various sources, and they clean that data by using various data science techniques to ensure it’s as accurate as possible.

The goal of this process is to build a knowledge base on which they can train the AI. You will notice every 6 months or so, there’s a new version of ChatGPT: “o1”, “4o”, “GPT-3”, “GPT3.5”, “GPT-5”, etc…

Training or teaching AI is an expensive venture; it takes time because we are dealing with billions of data points. Thus, a model is a program, a “black box” filled with layers and layers of information (known as neural networks).

The model provider will feed this program with the information over a period of time. The program will then store that information in its neural networks (a bit like memorizing information for an exam) and apply various mathematical calculations to make sense of the data. This process can take months; once it’s complete, the program is then frozen in time, its knowledge and understanding frozen on that dataset, thus the “GPT-5”, “o1”, etc, is just a label of the box based on which dataset it was trained on.

Furthermore, to clarify, if you trained the program when Obama was the president of the US, then asked the model, “Who is the current president? “ In 2022, it’s going to say “Oboma” or “My knowledge cut off is up until 2016, therefore I cannot answer that question”.

At every new version, the AI companies may discover new techniques and improve upon the dataset, which ultimately leads to a smarter model. This is why they name them at the end of each training cycle.

It may be possible that “GPT-5” is the smartest model at this point, but for a particular task, “GPT4o” might still perform better; this is why they don’t just overwrite the old model. It actually happened recently, where “GPT-5” answered questions more robotically; even though it was smarter, it seemed less human, thus people still preferred “GPT4o”.

While the model itself may not have memory beyond its cutoff point; most modern Chatbot systems like ChatGPT or Claude do have access to the internet and other data sources like RAG, which allows them to pull up to data information but if you discounted the model from these sources, it’s going to respond with the dataset version of it’s information.

What is hallucination?

I am sure you have experienced this before; if not, you will at some point when interacting with a chatbot. You ask the bot a question, it gives you a long, detailed answer full of confidence, but when you fact-check the answer, it’s either completely or partially incorrect.

Helliplication is basically when the algorithm predicts the answer incorrectly, which is bound to happen with current models, regardless of how smart they are.

What is RAG?

I covered this extensively throughout my blog with coding examples as well, so I won’t dive too deeply into this subject matter, but will just give you a general idea of what this means. If you are interested in a more technical understanding, please check out one of my earlier articles: How to build a PDF chatbot with Langchain 🦜🔗 and FAISS

RAG stands for “Retrieval Augmented Generation,” which is a fancy way of saying that you can supplement the model’s memory with your own dataset.

For example, if I had to ask ChatGPT, “What is the price of the latest iPhone?” It gives me pricing in dollars and information from foreign sources. I am South African, and I’m more interested in the “Rand” price and local stores.

To fix this, I can upload a PDF via the ChatGPT interface detailing the information from local retailers, and then re-ask it the same question, and tell it to find the answer from that PDF. This time round, it’s going to give me the correct answer based on the information in that PDF.

This is incredibly useful to websites that use Chatbots, because if I have an e-commerce store, I can build a RAG system that feeds product data from my store to the Chatbot; thus, even though I’m still using ChatGPT via the API, I can scope its response to only come from my RAG information and suggest only products from my store.

Can you imagine being on takealot.com, and then getting product recommendations from amazon.co.za? This will be a disaster, giving a competitor free advertising!

Therefore, RAG allows us some control of the Chatbot’s knowledge retrieval.

Inference and other common terminology

Some common terminology you’ll often hear:

Tokens: When models ingest your prompt, they don’t read it as a sentence or word; instead, every word gets translated into a mathematical representation, and these representations are known as tokens. 1 token roughly equates to 4 characters, but this may vary depending on the word or phrase.

Epoch: An epoch is one complete pass through your entire dataset. You normally would train the model via more than one epoch, because the second, third, fourth times, etc. It sees the data, it can apply optimizations, and improve its learning. Of course, too many Epochs can hurt accuracy, so this requires some balancing efforts.

Inference: is when you actually ask the model a question, so this is a trained model, and each time you “prompt” the model, it needs to convert your request into tokens and do a whole bunch of math to determine the final response back. This process does require GPU resources as well, but it is not as computationally expensive as training.

Model weights: After training a model, it generates something called “weights,” which are basically mathematical patterns derived from your training data. Since models are essentially predicting the next best word, phrase, or sentence, they use these weights to influence which sentences or words to return based on your input prompt.

Neural network: Inspired by the human brain, which is made up of millions of neurons. Let's call them "micro-computers" that arrange together to form a chain, a network, and together these "micro-computers" can digest, analyze, and parse information in an intelligent way, leading to some outcome like making you smile, or crying, or running, etc. In the same way, models have neural networks made up of many layers, and each layer processes and transforms the information before passing it to the next layer. Collectively, they work together to build complex patterns and ultimately generate tokens that we get back as text or images, or even video.

Model parameters: You’ll often see “7B”, “12B”, “24B”, 70B etc, next to model names. These refer to the number of parameters a model contains (in one billion units, so 7 billion, 12 billion, etc). Parameters are simply the number of weights and neural connections the model contains in its neural networks. Usually, the more parameters = smarter model, but this is not always the case because various factors can influence intelligence, including training process, quality of the dataset, and so forth.

What fine-tuning?

Like OpenAI or Anthropic, you too can train your own model on your own dataset. Usually, it would be crazy expensive to start from scratch, so it’s much easier and more cost-effective to just take an existing model and train it further using your own data.

This process will add or replace knowledge in the existing model to make it behave in a certain way. Currently, if you talk to Claude or ChatGPT, it’s conversational, but you may want a model that just follows a particular sequence.

This can be useful for restaurant ordering or booking car repair appointments, or just about any kind of niche task. You can give the model a few hundred / thousand examples, teaching how you want it to respond, and then the model will follow your examples rather than generic answers.

The benefits of fine-tuning:

Faster inference. With RAG, you need to query a vectorstore, then pass that information to the model. This could add some speed overhead to responses. With Fine-tuned models, you don’t necessarily need to fetch extra data since everything is already baked into the model (you can still use RAG with fine-tuned models if needed).

Cheaper inference. Not always the case, but for niche tasks, you could use much smaller models like “tinyllama” which can run on smaller GPUs.

Better performance. While RAG works well, it doesn’t always lead to accurate results. With fine-tuning, you could get much more accurate responses depending on your data and use case.

Will AI take my job?

While AI can automate some tasks and help humans speed up their workflow, AI is not a drop-in replacement for human beings, purely because of the distinction made in my first point of “AGI”.

I am not saying there won’t be job losses; of course, with every new platform shift, there will always be a percentage of jobs no longer needed. In most of these cases, it’ll just require upskilling and pivoting of the individuals, rather than mass unemployment.

For example, in the past, you would need a frontend developer to design landing pages. With tools like Claude code, you could potentially spin up a world-class landing page in minutes without a frontend developer.

Does this mean the end of all frontend developers? No! Not all websites are simple landing pages; some need multi-stage forms, integration with legacy systems, and complex workflows. AI is simply not smart enough to handle all of these.

Thus, these developers would probably have more time to actually sit with clients or expand their skills into other domains like backend development, or even end up making way more money! Because they can automate more of their job, thus allowing them to push out more projects in a shorter space of time.